If nobody reports near misses, your site isn’t perfect. More likely, speaking up feels unsafe. Silence is a leading indicator of operational risk, and delayed reporting is one of the most expensive and preventable risks on any jobsite. Measuring psychological safety takes a systematic playbook, not a consultant. Here are 8 field-ready methods to assess crews and interpret results by crew or shift, starting with a definition of psychological safety that fits a field or plant environment.

1. Measuring Psychological Safety as a Practical Field Metric

A quiet jobsite often masks operational risk. If your crew stays silent, they aren’t protected. Psychological safety is the shared belief that speaking up won’t trigger punishment, ridicule, or career damage.

In a frontline context, this translates to three critical behaviors:

- Reporting injuries early before they escalate.

- Logging near misses or “good catches” to identify process flaws.

- Raising production pressure conflicts when pace compromises safety.

Run a fast diagnostic: what would a worker avoid if they didn’t trust their lead? Common jobsite examples include:

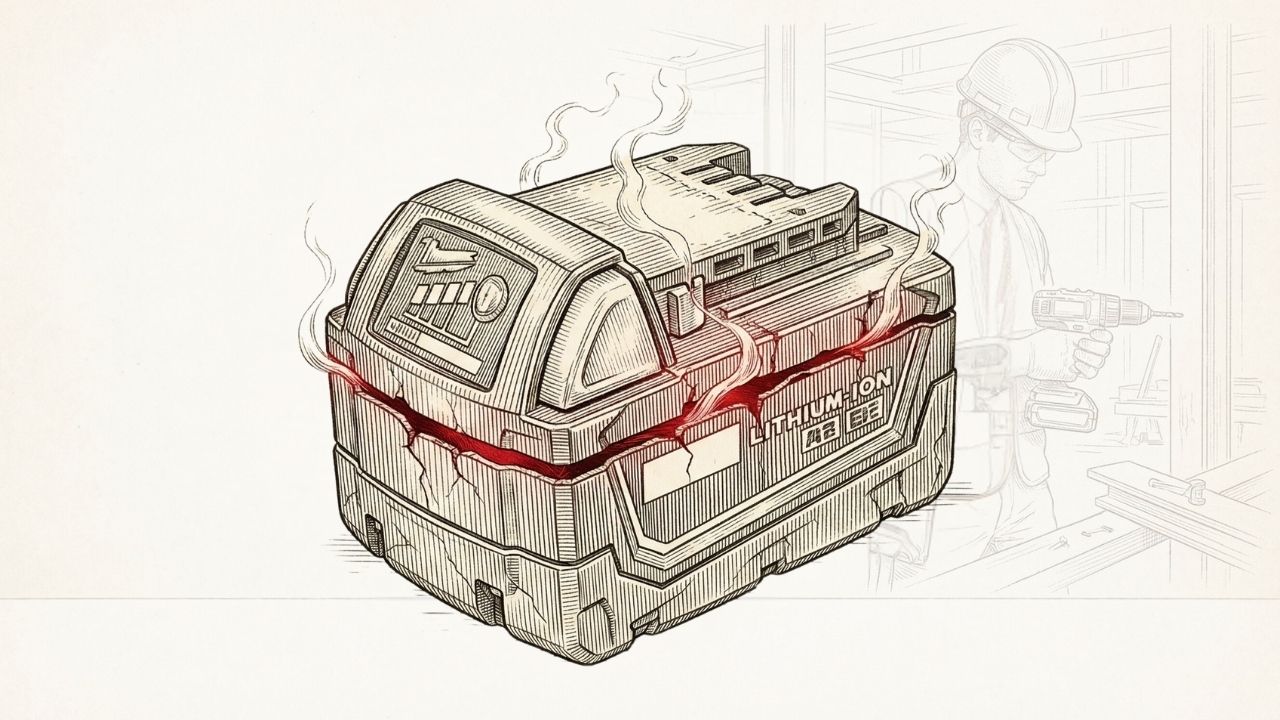

- Hiding equipment damage.

- Concealing “minor” cuts or injuries.

- Bypassing safety protocols to hit a quota.

If these conversations aren’t happening, your culture is the bottleneck. Your intent doesn’t matter; their perception is the only reality.

2. Audit Your Operational Data for Stealth Risk

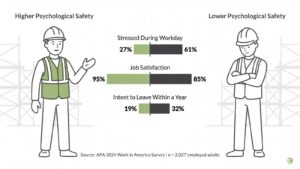

Reporting delay is a risk multiplier. The American Psychological Association’s 2024 Work in America Survey found that nearly half of U.S. workers experience lower psychological safety on the job, and those workers are more than twice as likely to feel tense or stressed during a typical workday. Stress suppresses reporting. Crews that sit on information inflate your liability. You don’t need a survey to find these gaps; you need a dashboard.

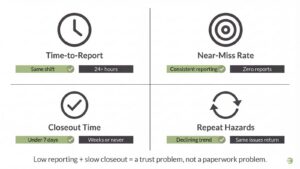

Monitor these four signals to identify where trust is breaking down:

- Time-to-report: The lag between an incident occurring and the first report submission.

- Near-miss rate: Near misses per 10k hours. Watch for “too good to be true” zeros that signal a fear of punishment.

- Closeout time: The delay between report submission and verified corrective action.

- Repeat hazards: Recurring issues that suggest the crew believes reporting is futile.

Low reporting paired with slow closeout signals a trust problem, not a paperwork one.
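As a rough sketch of how the first two signals could be pulled from an incident log, the Python below computes median time-to-report and near misses per 10k hours for each crew. The record layout, field names, and sample numbers are assumptions for illustration, not the export format of any particular EHS system.

```python
from collections import defaultdict
from datetime import datetime
from statistics import median

# Hypothetical incident log; field names and values are illustrative only.
incidents = [
    {"crew": "A", "occurred": datetime(2024, 5, 1, 8), "reported": datetime(2024, 5, 1, 9), "near_miss": True},
    {"crew": "A", "occurred": datetime(2024, 5, 3, 14), "reported": datetime(2024, 5, 3, 16), "near_miss": True},
    {"crew": "A", "occurred": datetime(2024, 5, 7, 10), "reported": datetime(2024, 5, 7, 13), "near_miss": False},
    {"crew": "B", "occurred": datetime(2024, 5, 2, 22), "reported": datetime(2024, 5, 4, 22), "near_miss": False},
]
hours_worked = {"A": 20_000, "B": 20_000}  # assumed exposure hours per crew


def signals_by_crew(records, hours):
    """Return median reporting lag (hours) and near misses per 10k hours, per crew."""
    grouped = defaultdict(list)
    for rec in records:
        grouped[rec["crew"]].append(rec)

    summary = {}
    for crew, recs in grouped.items():
        lags = [(r["reported"] - r["occurred"]).total_seconds() / 3600 for r in recs]
        near_misses = sum(1 for r in recs if r["near_miss"])
        summary[crew] = {
            "median_hours_to_report": median(lags),
            "near_miss_per_10k_hours": near_misses * 10_000 / hours[crew],
        }
    return summary


signals = signals_by_crew(incidents, hours_worked)
print(signals["A"])  # {'median_hours_to_report': 2.0, 'near_miss_per_10k_hours': 1.0}
print(signals["B"])  # {'median_hours_to_report': 48.0, 'near_miss_per_10k_hours': 0.0}
```

In this made-up data, crew B pairs a zero near-miss rate with a two-day reporting lag, which is the "too good to be true" pattern worth a closer look.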

3. Deploy a Research-Backed “Field Trust” Survey

Most EHS programs suffer from “homebrew survey noise” that makes measuring psychological safety difficult. To get actionable insights, use Amy Edmondson’s 7-item scale alongside three EHS-specific probes.

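Ask employees to rate these statements on a 5-point scale. The items are paraphrased here in positive form; in Edmondson’s original wording, the first, third, and fifth are phrased negatively (for example, “mistakes are held against you”) and must be reverse-scored: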

- Mistakes aren’t held against you.

- Tough issues can be brought up.

- Differences are accepted.

- It is safe to take risks.

- It is easy to ask for help.

- Efforts aren’t deliberately undermined.

- Unique skills are valued.

- Near-miss follow-up is fair.

- Injury reporting doesn’t impact overtime or future assignments.

- Supervisors treat employee and contractor reports equally.

Collect only “safe” tags like crew/shift, role (employee/contractor), and tenure band to ensure anonymity. When scoring, focus on team-level averages rather than individual data.

Flag “fear of consequence” items as red-alert leading indicators. The final deliverable is a ranked list of crews by trust, providing a credible, no-consultant instrument to identify where the silence is loudest.
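The scoring step might look something like the sketch below: reverse-score the negatively worded items, average each response, then average by crew and rank from lowest trust to highest. The item keys, the three-item subset, and the sample answers are hypothetical, and the sketch assumes the negatively worded versions of those items were fielded.

```python
from collections import defaultdict

SCALE_MAX = 5  # 5-point agreement scale
# Assumed: these items were asked in their negative wording, so they are flipped.
REVERSE_SCORED = {"mistakes_held_against", "hard_to_ask_help"}


def score_response(answers):
    """Average one respondent's items after reverse-scoring (1<->5, 2<->4)."""
    adjusted = [
        (SCALE_MAX + 1 - value) if item in REVERSE_SCORED else value
        for item, value in answers.items()
    ]
    return sum(adjusted) / len(adjusted)


def rank_crews(responses):
    """Return (crew, mean score) pairs, lowest-trust crew first."""
    by_crew = defaultdict(list)
    for resp in responses:
        by_crew[resp["crew"]].append(score_response(resp["answers"]))
    ranked = [(crew, round(sum(s) / len(s), 2)) for crew, s in by_crew.items()]
    return sorted(ranked, key=lambda pair: pair[1])


# Illustrative responses using a three-item subset to keep the example short.
responses = [
    {"crew": "A", "answers": {"mistakes_held_against": 2, "tough_issues_raised": 4, "hard_to_ask_help": 1}},
    {"crew": "A", "answers": {"mistakes_held_against": 1, "tough_issues_raised": 5, "hard_to_ask_help": 2}},
    {"crew": "B", "answers": {"mistakes_held_against": 4, "tough_issues_raised": 2, "hard_to_ask_help": 5}},
    {"crew": "B", "answers": {"mistakes_held_against": 5, "tough_issues_raised": 3, "hard_to_ask_help": 4}},
]

ranking = rank_crews(responses)
print(ranking)  # [('B', 1.83), ('A', 4.5)]
```

Only crew-level averages leave the function, which keeps the output in line with the team-level focus described above.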

4. Establish Survey Legitimacy to Avoid the “Trust Trap”

Measurement fails if workers assume the survey is a trap. If staff suspect HR is tracking “troublemakers,” they will provide safe answers while operational risks stay buried. Legitimacy must precede mechanics.

Set expectations early. Explicitly state this is not a performance review tool or a “name and shame” list. Protect participation with strict anonymity rules:

- Group Thresholds: Never report data for segments smaller than five people.

- Verbatim Scrubbing: Delete comments that identify specific trades, seniority, or locations.

- External Hosting: Use third-party tools to distance collection from internal IT.

- Hard Deadlines: Promise a specific timeline: “We will share results by X date and implement two fixes by Y date.”
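One way to enforce the group threshold in a reporting script is sketched below. Groups with fewer than five responses are never reported on their own; their scores are pooled, and the pool is reported only if it reaches the threshold too. The function name, group labels, and scores are assumptions for illustration.

```python
MIN_GROUP_SIZE = 5  # threshold from the anonymity rule above


def reportable_averages(scores_by_group, min_n=MIN_GROUP_SIZE):
    """Return averages only for groups (or pooled small groups) with at least min_n responses."""
    report = {}
    pooled = []
    for group, scores in scores_by_group.items():
        if len(scores) >= min_n:
            report[group] = round(sum(scores) / len(scores), 2)
        else:
            pooled.extend(scores)  # too small to show alone
    if len(pooled) >= min_n:
        report["combined small groups"] = round(sum(pooled) / len(pooled), 2)
    return report


# Hypothetical per-respondent scores by crew.
scores = {
    "Day-A": [4, 4, 5, 3, 4],
    "Night-B": [2, 3, 2],
    "Night-C": [3, 2],
}

report = reportable_averages(scores)
print(report)  # {'Day-A': 4.0, 'combined small groups': 2.4}
```

Pooling is a simple choice rather than a guarantee of anonymity; if other published totals would let someone back out a small group's score by subtraction, suppress the pooled figure as well.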

If previous reports triggered “witch hunts,” you cannot survey your way out of that cycle. Fix the response system before seeking more feedback.

5. Isolate “Pockets of Fear” Through Data Variance

Company-wide averages are vanity metrics that mask operational risk. Look for variance to find the real signal. Compare Crew A vs. Crew B or night vs. day shifts. Silence in one group reveals a “pocket of fear.”

Build these outputs to turn data into a targeting mechanism:

- Risk Heat Map: Map crews by shift. Target “Red Zones” where low safety scores overlap with high-exposure work like line breaks or hot work.

- Top 3 Fear Drivers: Identify why teams stay silent using reporting-fear add-ons and comment themes.

Prioritize teams where silence creates the highest physical risk. This shifts psychological safety measurement from a sentiment check to a high-leverage safety tool.
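To illustrate how an average can hide a pocket of fear, the sketch below compares a company-wide mean with per-shift means and flags "Red Zone" crews where a low score overlaps with high-exposure work. The crew scores, the `high_exposure` flag, and the 3.0 cutoff are all assumed values, not recommended thresholds.

```python
from collections import defaultdict

# Hypothetical crew-level survey averages on a 5-point scale.
crews = [
    {"crew": "A", "shift": "day", "score": 4.2, "high_exposure": False},
    {"crew": "B", "shift": "night", "score": 2.6, "high_exposure": True},
    {"crew": "C", "shift": "day", "score": 3.9, "high_exposure": True},
    {"crew": "D", "shift": "night", "score": 2.9, "high_exposure": False},
]


def shift_means(rows):
    """Average crew scores within each shift."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[row["shift"]].append(row["score"])
    return {shift: round(sum(s) / len(s), 2) for shift, s in grouped.items()}


def red_zones(rows, cutoff=3.0):
    """Crews scoring below the (assumed) cutoff while doing high-exposure work."""
    return [r["crew"] for r in rows if r["score"] < cutoff and r["high_exposure"]]


company_mean = round(sum(r["score"] for r in crews) / len(crews), 2)
by_shift = shift_means(crews)
targets = red_zones(crews)

print(company_mean)  # 3.4
print(by_shift)      # {'day': 4.05, 'night': 2.75}
print(targets)       # ['B']
```

The company-wide 3.4 looks unremarkable, yet the night shift sits well below the day shift and crew B lands in the Red Zone.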

6. Track Feedback Loop Responsiveness as a System Metric

Every ignored near miss trains the workforce to shut up. In high-risk environments, measuring psychological safety is less about leader speeches and more about what happens after a report. If your inbox is a black hole, people stop talking.

Treat responsiveness as a system metric, not a personality trait. Track these KPIs:

- Time-to-acknowledge (Initial receipt)

- Time-to-first action (Risk containment)

- Time-to-close (Verified fix)

Audit response quality to avoid the failure mode seen in behavior-based safety (BBS) programs, where feedback is punitive or performative. Verify:

- The reporter was thanked.

- Learning was shared with the crew.

- The response was consistent regardless of who reported.

This standardizes a leader-controlled indicator of trust. It predicts whether workers report hazards early or wait until they become serious.
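The three responsiveness KPIs could be computed from report timestamps along these lines. This is a minimal sketch: the field names and sample reports are hypothetical, and reports that have not yet reached a stage are simply left out of that stage's median.

```python
from datetime import datetime
from statistics import median

# Hypothetical report records; None means the stage has not happened yet.
reports = [
    {"submitted": datetime(2024, 6, 3, 7), "acknowledged": datetime(2024, 6, 3, 9),
     "first_action": datetime(2024, 6, 3, 13), "closed": datetime(2024, 6, 5, 7)},
    {"submitted": datetime(2024, 6, 4, 15), "acknowledged": datetime(2024, 6, 4, 19),
     "first_action": datetime(2024, 6, 5, 15), "closed": datetime(2024, 6, 8, 15)},
    {"submitted": datetime(2024, 6, 6, 6), "acknowledged": datetime(2024, 6, 6, 12),
     "first_action": None, "closed": None},
]


def median_hours(records, stage):
    """Median hours from submission to the given stage, ignoring unfinished reports."""
    lags = [
        (r[stage] - r["submitted"]).total_seconds() / 3600
        for r in records
        if r[stage] is not None
    ]
    return median(lags) if lags else None


kpis = {
    "time_to_acknowledge_h": median_hours(reports, "acknowledged"),
    "time_to_first_action_h": median_hours(reports, "first_action"),
    "time_to_close_h": median_hours(reports, "closed"),
    "still_open": sum(1 for r in reports if r["closed"] is None),
}
print(kpis)
# {'time_to_acknowledge_h': 4.0, 'time_to_first_action_h': 15.0, 'time_to_close_h': 72.0, 'still_open': 1}
```

Counting open reports alongside the medians matters, because a stalled report never shows up in a time-to-close figure.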

7. Use Observable Proxy Metrics as “Smoke Alarms”

Surveys are easily gamed when measuring psychological safety. In low-trust environments, use observable “smoke alarms” to find the truth. A major red flag is perfect audit compliance paired with zero voluntary voice. This pattern indicates workers are checking boxes to avoid trouble instead of managing risk.

Monitor these four behavioral signals:

- Huddle Participation: Track who speaks versus who stays silent during job safety analyses (JSAs).

- Stop-Work Frequency: Is stop-work authority actually exercised or just a policy on a poster?

- Suggestion Volume: The number of proactive improvement ideas per crew monthly.

- Narrative Richness: Compare one-line “all good” notes against detailed learning reports.

Field tip: If one person dominates planning, you are measuring hierarchy instead of hazard awareness.
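Huddle participation can be reduced to two numbers from a simple tally of speaking turns: the share of the crew that spoke at all, and the share of turns taken by the most active speaker. The sketch below assumes an observer logs one role label per speaking turn; the labels and counts are made up.

```python
from collections import Counter


def voice_share(turns, crew_size):
    """Summarize who spoke in one huddle from a list of per-turn speaker labels."""
    counts = Counter(turns)
    total_turns = sum(counts.values())
    top_share = max(counts.values()) / total_turns if total_turns else 0.0
    return {
        "participation": len(counts) / crew_size,  # fraction of crew with at least one turn
        "top_speaker_share": top_share,            # fraction of turns by the busiest speaker
    }


# Hypothetical tally from one morning huddle with a six-person crew.
turns = ["lead", "lead", "lead", "worker_1", "lead", "worker_2", "lead", "lead"]

huddle = voice_share(turns, crew_size=6)
print(huddle)  # {'participation': 0.5, 'top_speaker_share': 0.75}
```

Here half the crew never spoke and one person took three quarters of the turns, which matches the hierarchy pattern in the field tip.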

8. Quantify the “Cost of Silence” for Executive Buy-In

A quiet jobsite is an expensive liability. Research consistently links low psychological safety to higher claim costs, longer recovery times, and increased litigation risk. Shift the executive conversation from abstract culture to concrete metrics like claims friction and operational drag.

Estimate your “cost of silence” by multiplying late reports by severity uplift. Factor in the costs of downtime, rework, and investigation friction. This transforms psychological safety into a systematic risk management strategy.
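As a back-of-the-envelope sketch, the estimate can be written as late reports multiplied by an average severity uplift per report, plus the friction costs. Every figure below is a placeholder to show the arithmetic; substitute numbers from your own claims and downtime records.

```python
def cost_of_silence(late_reports, severity_uplift_per_report,
                    downtime_cost=0, rework_cost=0, investigation_cost=0):
    """Rough annual estimate: late reports x uplift, plus downtime, rework, and investigation."""
    uplift_total = late_reports * severity_uplift_per_report
    return uplift_total + downtime_cost + rework_cost + investigation_cost


# Placeholder inputs, not benchmarks.
total = cost_of_silence(
    late_reports=12,                   # reports filed after an agreed window
    severity_uplift_per_report=4_000,  # assumed extra claim cost per late report
    downtime_cost=15_000,
    rework_cost=6_000,
    investigation_cost=3_000,
)
print(f"{total:,}")  # 72,000
```

Keeping the inputs explicit lets executives challenge each assumption separately instead of arguing over a single headline number.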

Run a 30-day action loop to prove value. Implement fixes like faster acknowledgment SLAs, simplified near-miss submissions, or supervisor coaching. Publicly report changes using a “you said / we did” framework and re-pulse at day 30 to compare trend lines by shift.

Frequently Asked Questions (FAQ)

1. What is a “good” psychological safety score and how do I handle a “bad” one?

Don’t chase a specific numerical target; that is false precision. Focus instead on rank-ordering your teams and tracking long-term trends. A score is a diagnostic tool, not a grade. If a crew scores low on items like “mistakes are held against you” or “it is hard to ask for help” (after reverse-scoring, so a low score means workers agree with the statement), treat that as an immediate intervention trigger. Always pair these numbers with one or two qualitative prompts so you know exactly which process or behavior to fix.

2. How often should we measure psychological safety in a plant or field environment?

Start by establishing a baseline for every crew and shift. Conduct a 30-day re-pulse after you implement visible fixes to verify that the changes actually moved the needle. Once the system stabilizes, move to quarterly pulses to maintain a high-resolution view of the culture. Increase this frequency during periods of high volatility, such as a change in supervisors, a new contract award, or a major shift schedule overhaul.

3. How can we measure small crews without compromising worker anonymity?

To solve the anonymity paradox, never report data for groups smaller than five people. Instead, aggregate data across comparable crews or entire shifts to protect individual identities. Use repeated micro-pulses over time and stick to theme-only reporting for open-ended comments. You can also supplement this data with proxy metrics such as stop-work usage, the speed of hazard closeouts, and the distribution of voice share during morning huddles.

4. Can we just add a single question to our existing employee engagement survey?

One item is usually too blunt to provide actionable data. Use a short, validated set of seven items paired with two or three probes specifically targeting the fear of reporting. Remember that engagement is not the same as speak-up safety. Engagement measures satisfaction and loyalty, while psychological safety measures candor and the willingness to highlight risk. Do not confuse a happy, quiet crew with a safe one.

5. How does this connect to ISO 45001 and ISO 45003 expectations?

ISO 45001 sets requirements for an occupational health and safety management system, and ISO 45003 provides guidance on managing psychosocial risks within it. Together they expect organizations to manage psychosocial risks and lead with proactive indicators. Keep your approach practical by measuring leading indicators like voice and leadership responsiveness alongside lagging outcomes like injury rates. Ethical handling of responses and strict confidentiality are non-negotiable. Following this systematic measurement playbook helps you meet ISO expectations without drowning the front line in unnecessary paperwork.

6. How does measuring psychological safety connect to OSHA’s anti-retaliation requirements?

Section 11(c) of the OSH Act prohibits retaliation against workers who report hazards, file complaints, or exercise any safety right. Measuring psychological safety creates documented evidence that your organization actively monitors and protects the reporting environment. If an 11(c) complaint surfaces, a track record of trust measurement, corrective action, and transparency demonstrates good faith. Ignoring the reporting climate does the opposite.

Sources: OSHA, American Psychological Association